Are you leaving employees better off or burned out?

How do you lead in a world where roles are changing faster than people can adapt? In this episode, Rick Cheatham talks with Steph Peskett and Abi Scott about the second major trend from their recent research: human sustainability. The conversation covers everything from AI disruption to proactive talent strategies, and why the most forward-looking companies are rethinking how they grow, support, and retain their people.

Most of us want to lead in a way that matters, to lift others up and build something people want to be part of. But too often, we’re socialized (explicitly or not) to lead a certain way: play it safe, stick to what’s proven, and avoid the questions that really need asking.

This podcast is about the people and ideas changing that story. We call them fearless thinkers.

Our guests are boundary-pushers, system challengers, and curious minds who look at today’s challenges and ask, “What if there is a better way?” If that’s the energy you’re looking for, you’ve come to the right place.

Rick: Welcome to Fearless Thinkers, the BTS podcast. I’m your host, Rick Cheatham, and this is the second in a two-parter on some research that we’ve been doing around trends in talent management. Specifically, Steph Peskett and Abi Scott have gone deep into what great talent management looks like today. In the first episode, we focused on trust and power, and here we’re gonna go deep into human sustainability.

I guess it probably is time for us to start talking about that second trend a little bit. So why don’t you start with an overview and then we’ll dig a little deeper.

Steph: Okay, I’ll try and ground us then in this idea of human sustainability. You know, in recent years there’s been an increasing focus on wellbeing and COVID was definitely an accelerator of that.

And I think the thing that’s really fascinating right now is that human sustainability is much more about something broader and more long term. It’s really about autonomy, growth, and employability. I absolutely love this topic because for me, human sustainability is so fundamental to making sure that every time we have an experience with an employee, whether that lasts for a year or a decade or even longer, they’re feeling that they’re being left better off all of the time.

Now, the thing that’s fascinating in the research that we did is that only 43% of employees feel their organization has left them better off than when they started. And I find that absolutely staggering ’cause I think it just goes to the heart of what we’re trying to solve for in human sustainability.

And it’s not good enough, in my opinion.

Rick: Yeah. And again, this is the second time in this conversation that you’ve just shocked me, because how on earth could organizations that are designed to drive continuous improvement have people walking away from that experience going, “Yeah, I might be worse off than when I came in”?

That’s a tough one. Obviously, there’s not a focus on sustainability at all in that environment.

Steph: It’s roughly half of workers, right? So, it leads us to, I think, really examine that really deeply held value of, you know, here for good, here for making a difference in the lives of the people that we touch: our employees, you know, our consumers, our customers.

All of those things are just so important, but it’s similar to that previous point where the thing that’s also disrupting the space is, of course, AI. And it’s another turn of the screw, really, because digital has been disrupting the experience of workers, then automation, and now we’re in the age of AI.

That too is creating a disruption in terms of that sustainability, quite literally, of humans, and people are nervous. People are definitely scared and wondering what it will mean for them.

Rick: Wow. So how do we begin, Abi, to build human sustainability into our fabric so that we don’t end up in the type of situation that Steph was just describing?

Abi: Mm, yes. Yeah, no, and I guess the thing is we can’t predict the future, and we’re not used to this pace of change, you know, with AI. I think it’s akin to, I guess, when we got the internet, when we got emails in the workplace, or when we got calculators. It’s technology helping our lives. There is that moment where you go, you know, when will the robot take my job?

The World Economic Forum reported some statistics just in January where they suspect that 85 million jobs could be displaced by the end of 2025 due to AI. But on the flip side of that, the good news is that 97 million jobs might be created because of AI. So, I think it speaks to the nature of work changing.

I think it’s about working out how can we work alongside the bots? How can we get the bots to help us?

Rick: Yeah, so help me think about what else our listeners could do, what actions they could be taking, again, whether it’s enterprise-wide or even within my team and who I work with day in and day out, to help create a more focused organization when it comes to human sustainability.

Steph: Yeah, well, I mean, this is like my favorite topic, so I should get onto this. Like, I’m just so passionate about technological advances like this, and I love the age that we’re living in, and I think it’s really about how do we enable this to be one of the most spectacular periods in human growth that we could possibly imagine?

And that really excites me. So I think, for example, if I think about, you know, picture yourself as a leader operating in a business today. Great news is that you have content help now, right? So that’s helpful. And that should take some things away from the burden of leading people and the challenges of that, and let you instead focus on the wonderful opportunity.

And that means, you know, creating community in the workplace so people feel they belong, helping individuals grow and having more time to apprentice them. Creating experiences in the work that are so memorable and special that you become the place that people wanna be. I think there are some amazing things that can be done in terms of your personal leadership and refocusing your attention.

And it does require conscious refocus because, like most things, it’s a change in routine and discipline. I think the other thing that really excites me about this is the ability for the organizations who are brave to start to have really proactive talent conversations. How is our workforce and the shape of our workforce changing as a result of the advent of AI? And HR really needs to be leading on that conversation.

I know we’re a little scared and we might get disrupted too, but we have the ability to be really transparent with people and to start to look at re-skilling people in proactive ways. In the banking industry, it happened probably about 10 years ago. Branches were shutting everywhere and we were moving to digital, and there was this real question of whose responsibility is it to re-skill?

Well, that’s not really coming out as loudly now, but the beauty is that AI will disrupt us, but with fantastic tools like conversation tools for AI, we will be able to re-skill people in the flow of work. We’re just transparent about: How is this changing? How will you be impacted? How do we keep you growing?

How do we keep you employed? How do we bring forward your beautiful human skills to sit alongside these incredible technology capabilities we now have?

Rick: Ah. So now my worlds are colliding. I’ve got trend one, I’ve got trend two. The thing that I often say about AI is we have to focus on our leadership and culture ’cause tools are gonna change faster than we can deploy them. And I think you’re saying something relatively similar. And so how am I transparent? How am I building trust when I don’t know? So, you know, as we talk about the sustainability and building trust kind of in the same breath, how does that work in this AI specific case?

Steph: I guess, you know, like most change, we have to start with ourselves, right? What’s my readiness for it? How do I feel about it? Am I looking to ride this thing out or am I gonna get out there in the front of it and get curious and start experimenting? Okay, so none of us are perfect in this and none of us know all the answers.

But I think it does start with experimentation and I think it starts with partnering up and, you know, challenging into our people functions to help us to get there. You know, this could sit in no person’s land in an organization unless, you know, it’s really claimed by the leaders and by our executives and our HR functions and everyone. You know, the beauty of AI is that it’s at everyone’s fingertips to get going, start experimenting.

Call a friend. What do you think, Abi? What do you reckon?

Abi: I totally agree. At BTS, we talk about, you know, executing strategy is about alignment, mindset, and capability, and that relates to AI, you know, like how aligned are you as an organization towards AI and new technologies? I guess, because it’s not just AI that will have an impact.

What mindsets do you as a leader have? What do your team members have? What’s the organizational mindset, and then what skills do people have around AI? Internally at BTS, we’ve got stories of partners who are being apprenticed and mentored by very young talent who have extreme expertise in AI. We also have major upskilling programs so that we know how to better utilize AI in our work.

So, I think leadership does become more of a partnership model overall. So it’s okay for the leaders not to have all of the answers. You know, you problem solve, and you work through it as a team and as an organization. So, I wonder if that’s a little bit of a shift perhaps in how we might work going forward.

Rick: That’s great. That’s great advice. So again, I kind of want to continue down this road of tying these two trends together, so to speak. I also sit here and think, alright, most leaders, most organizations, hopefully all, but I can’t say all, aren’t going, “You know what? We don’t really care if our employees trust us. We don’t really care if they feel like talent development’s a black box. What we really want to do is build a completely unsustainable organization where people feel like they’re being run into the dirt.” If no one wants to do that, I’m always curious as to, you know, what your research might say of the well-intentioned folks that are doing it anyway.

How are organizations breaking trust? How are organizations potentially making choices that aren’t promoting human sustainability but instead detracting from it? So, what’s the shadow side, so to speak, of what we’ve been talking about right now?

Steph: Look, I think the thing that organizations are doing that breaks trust, that I think we need to get really honest about, is where we, as the owners, founders, operators, executives, whatever, in an organization, are not doing the hard yards to really define what we mean in a transparent and explainable way.

So, it’s my opinion that any policy, any approach to talent, any approach to, you know, things like the working from home and all that stuff, it needs to be simple, clear, transparent, and explainable, and that is a responsibility we all have to employees. So how is it we can go from highly productive workforces during COVID when they worked from home to now an assumption that you are more productive if you’re in the office?

You know, we need to be evidence-based. We need to be clear and transparent about why we know that is better. After COVID, there were a lot of studies and questions about how you define productivity in white-collar workers, right? Knowledge workers. It’s easy in blue collar, but not so much in white.

Well, that’s never really been answered very clearly. And still we persist with policy changes that are confirmed on a hunch. Right. Same with hiring, same with succession, same with high potential, same with promotions. And I think we’ve all gotta do the hard yards to make sure it’s clear, transparent, explainable, and if it is, great, go for it.

Abi: Yeah. So, if a leader can understand each member on their team, if they know what motivates that individual, if they know their preferences and ways of working, then, you know, you’ve obviously gotta make sure that the processes are fair and equal for all, but being able to tailor communication, tailor ways of working to that individual to get the best out of each individual.

There’s something in that, and I wonder whether during COVID we were more focused on individual differences and the needs of each person in our team. And now we’ve just gone back to this sort of whole-of-organization, cohort-level approach.

Rick: And that’s actually a very interesting observation, that we were, so again, everything was so wellbeing focused and so individualized.

We possibly, like I was saying earlier, have swung too far back the other way. Well, I want to thank you so much for spending this time with me today, and I also want to thank you for doing this great research and narrowing it down to two things that we can go do. So many times, everything’s overcomplicated.

So very, very much appreciated both your time and your thinking, and I’m sure we’ll have you back again soon.

Abi: Thank you for having us, Rick.

Steph: Appreciate it.

Rick: Thanks for joining me today. It’s always a pleasure to bring to you our fearless thinkers. If you’d like to stay up to date, please subscribe. Bios for our guests and links to relevant content are always listed in the show notes.

If you’d like to get in touch, please visit us at bts.com and thanks so much for listening.

Related Content

In Part 1, I told you about the three decisions we made two years ago and the simulation flywheel that produced our first Applied AI diamond.

Here’s the field-notes version.

Over 80% of our global business adopted a new Applied AI approach for doing simulations in the first eight weeks, across 24 countries and every practice.

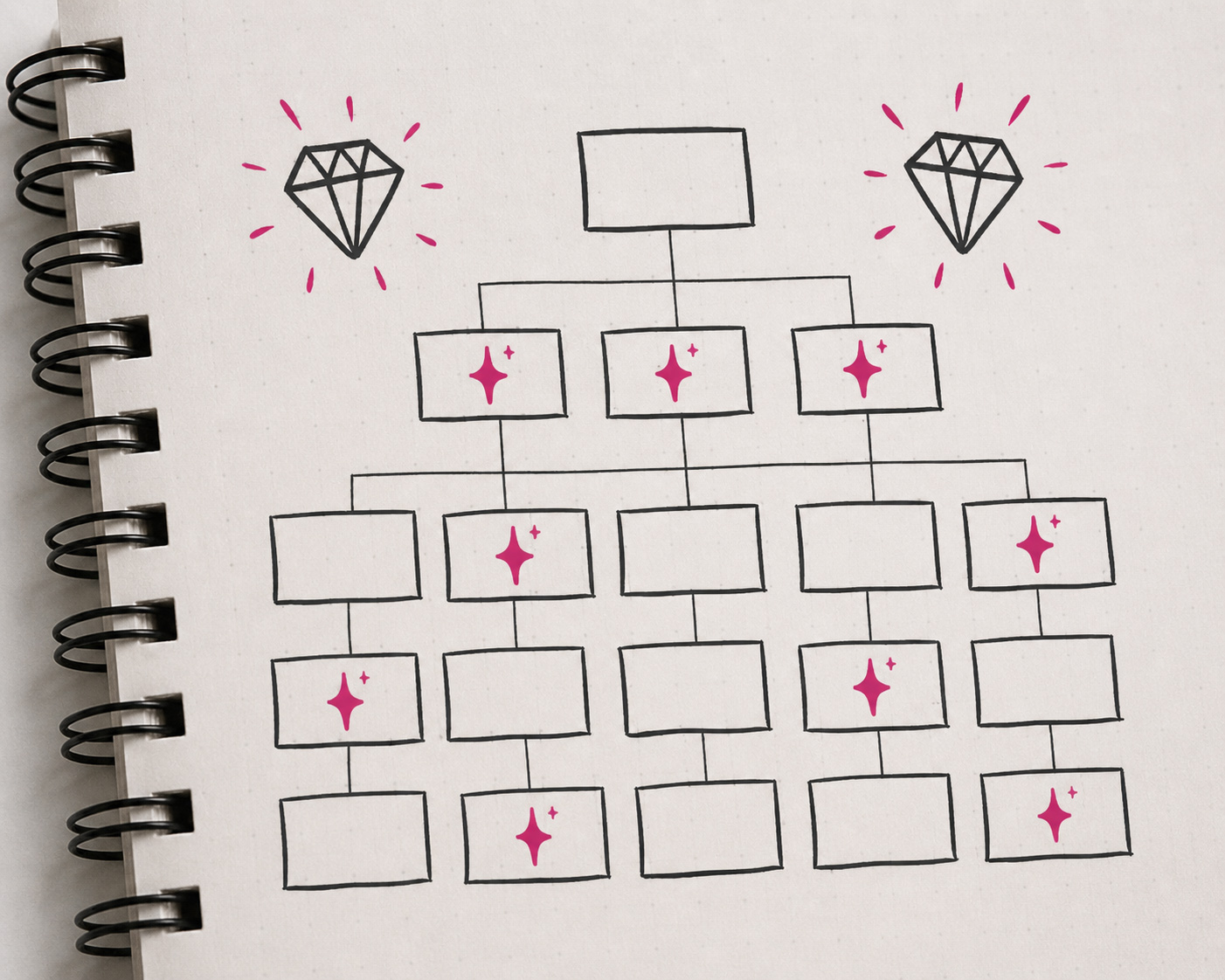

The flywheel didn’t stop with simulations. It moved into finance, sales enablement, legal, operations, and client delivery. Teams started building agents and bringing them onto their own org charts. We didn’t plan for any of that. We built the conditions for people to find their own breakthroughs.

What it felt like inside the flywheel.

When the simulation team went live with their first clients on the new way of working, the lead person hit a wall. Their words:

“You’re asking too much. You’re making me be a full-stack developer. Up until this point I did a small part, and I sent it to the team, and they built off the back end, and they brought it back. And now I have to end-to-end soup to nuts, basically alone.”

There was graphic UI work nobody had been trained for, the fear of delivering quality below what BTS expects of itself, and the weight of not having a playbook. This was not the joyful adoption story most consultancies tell.

Then something shifted. Six members showed up for product testing, where the usual was two or three. The work created teamwork I hadn’t seen at BTS in years. The breakthrough was not an instantaneous change from skepticism to celebration. It was a breakdown in confidence, then a rally, then bonding. If we hadn’t made room for the breakdown, we would have lost the rally.

The other breakthrough was global teamwork, which is not yet a BTS core strength. Our culture is beautiful: high-freedom and entrepreneurial. But people’s first identities are to their countries. Almost every prior attempt we’ve made at a global initiative has failed. The one exception was Covid. So, when I say what happened next surprised me, I mean it.

I asked to join the simulation team’s Slack channel rather than pulling them into status meetings. What I got to watch in the mornings was someone in South Africa waking up, posting “I tried this and got stuck,” then London adding on, then San Francisco weighing in, then a surprise breakthrough overnight from Tokyo. We didn’t engineer that. Curious and determined BTS’ers did. The problem was interesting enough that the org chart didn’t matter. It was amazing to see and a glimpse into the next evolution of the BTS culture.

The pattern: Explore, expand, institutionalize, renew.

What we’ve now seen play out, both inside BTS and with clients, follows the same four-step pattern. Each step asks a specific decision of the leader.

Explore.

Stay stubborn on the aspiration and fluid on the path. Our breakthrough wasn’t the path we originally took. We changed tools and approaches. Nobody could have foreseen that. And if the team had taken the first six months of learnings from AI as their definitive “this is the detailed path we will follow,” we never would have gotten the disruption. Five different tool combinations were tried before we found the one that worked. Companies that lock into a single path or tool too early are betting against compounding capability that doubles roughly every seven months. That is not a bet I’d take.

Expand.

Run the old way and the new way side by side. When the simulation team’s breakthroughs got real, the instinct was to retreat into more internal testing. We did the opposite. They ran the old way and the new way in parallel on 6 or 8 live client projects across all three geographies. Every single one ended up going live the new way. The backup was always there. They didn’t need it.

Institutionalize.

Burn the boats. The simulation team committed that no new client work would be done the old way after January 1. The other practice leads then committed to dates within Q1, even though most of them had not yet experienced the new way themselves. They had to trust their colleagues. If you can do it for the most complex thing, you can probably do it for the less complex ones. By February 15, we were approaching 90% global adoption across 24 countries and all practices. I was shocked and proud. We had spent years failing at exactly this kind of global rollout.

Renew.

Treat your agents as contractors. People on our diamond teams are now managing 30+ agents they built themselves. Our teams give agents performance feedback. We terminate their contracts when they don’t deliver. We expand the responsibility of agents when they outperform. The frontier question we’re wrestling with now is token budgeting. Two friends of mine running engineering-heavy companies believe that within 6 to 9 months, their token cost per engineer will exceed the cost of the engineer. Whether that’s the right framing is open. The question is real, and every CEO will be asked some version of it within the year.

What had to be true for this to scale.

Once we achieved this amazing global innovation, the leadership sat down to figure out what made it work. We named five things. None of them were about the technology.

Real pain points as the starting point. We had so many people frustrated with the old ways of working, all the back and forth and all the wasted time, that this was gold for them. The old way was already painful. The new way wasn’t a forced disruption; it was relief. Find the workflow where the pain is loudest and start there.

The diamond unlocked creativity; it didn’t constrain it. This was the most differentiated insight, and the one most leaders miss. It wasn’t “here are the new tasks and rules.” It was, “once you learn how to do this, the sky’s the limit. You can be even more creative.” If your rollout feels like a new set of rules constraining your people, you’ve built the wrong thing.

Pair deep expertise with fresh eyes. A disproportionate share of our breakthroughs came from a tenured tinkerer with total command of the work, paired with someone new to the role who hadn’t yet built the muscle memory of how it had always been done. Without that pairing, you get incremental improvements to the work you already know how to do, instead of a reinvention.

Refuse the “people are too busy” reflex. When I brought the rollout to the global leadership team, the excuses came fast. “Our people are too busy. They’re burnt out. Q1 is going to be busy. No one’s going to have time.” My response: “This is a chance to eliminate the tasks you dread and expand what you love. I know it is a short push of extra work, and I think after the fact you and your team will feel joy and pride and say it was the best time we ever spent.” This is the moment most AI rollouts die.

Senior leaders must lead by example and do the work themselves. This is not a middle manager’s job. This is not something you delegate. Even though you don’t build simulations anymore, you must know what this is. One of our partners proactively put time on senior leaders’ calendars and forced them to do the work. Once they started building, the excitement grew, and they could advocate for the rollout because they understood it. If your executives haven’t put their hands on the keyboard, you don’t have a rollout. You have a memo.

What we’re seeing across clients.

We’re now running this play with client organizations across industries and geographies. The companies whose flywheels are accelerating paired their A-players with their early-career talent, pulled IT and legal into the working sessions, refused the “too busy” reflex, and put their senior leaders’ hands on the keyboard. The companies whose flywheels are stuck almost always have a leadership pattern at the center of the stall. Not a tooling pattern. Not a governance pattern. A leadership pattern.

If this resonates, let’s talk.

If you read Part 1 and asked yourself whether your flywheel was turning, the question I’d add now is sharper: do you have the conditions in place for a diamond to appear? If yes, you’re already moving. If no, the technology will not save you.

Here's where we're starting with clients: a working session, half day to a full day, with a small group that owns one of your highest-friction processes. Together we map where your first diamond is most likely to land, how to set up the side-by-side trial, and what your version of "burn the boats" should look like.

The destination, if we do this right, is a self-reliant culture of applied AI inside your company: 5, 10, 15 diamonds compounding into a fundamentally different way of operating. From what I have experienced, this is a once-in-a-career opportunity for dramatic shareholder value creation if you get that muscle going. I say that because I'm watching it happen, in real time, inside our own company and across our client base.

If you want to get your flywheels spinning and map your first diamond, start here. Bring your hardest workflow. We'll bring the playbook.

Three decisions that changed everything.

Two years ago, we made three deliberate decisions about how BTS would move with Applied AI.

We would become our own Customer Zero.

While others were building strategies, defining governance, and waiting for clarity, we made a different call: we decided not to wait. Not because the stakes were low, but because they were high. And because in a space evolving this quickly, clarity wouldn’t come from planning. It would come from movement.

So instead of starting with a roadmap, we started with three principles:

- No top-down mandate. The people closest to the work figure it out.

- IT must evolve from gatekeeper to enabler - leading AI trials and fast experimentation.

- Don’t wait for certainty.

We set the organization in motion, and once we did, things started to move quickly.

What if we started this company today?

Waiting for certainty is itself a choice, and it’s costing companies more than they realize.

We started where we knew the work best: our simulations. No perfect plan, just teams moving, trying, and iterating.

Simulations are core to who we are at BTS. Companies that simulate don’t just make better decisions; they execute faster and build more engaged cultures.

The team asked a simple question:

“What if we were to start our company today?”

That question started the flywheel.

They asked IT for a few licenses and started building - vibe-coding, writing agents, and testing tools - moving at a pace that would make any VC-backed start-up smile.

The messy middle.

At first, the team was underwhelmed.

The early reports were blunt:

“Not good with math.”

“Poor graph capabilities.”

The team wasn't discouraged. They kept tinkering - jumping between tools, staying on top of new releases, experimenting constantly.

This was a small team, across 24 countries, building off each other’s ideas. Laughing at crazy creations. Breaking things. Iterating in a sandbox alongside real client work.

Each cycle produced something:

- A sharper scenario

- A faster build

- A more powerful simulation

The flywheel was turning, and it was generating something real.

When the diamond appeared.

Then something shifted.

The team moved into client trials across five countries. They figured out ISO compliance and built the architecture to handle the complexity, the “spaghetti.”

And what emerged wasn’t incremental:

- What used to take weeks started happening in days.

- Limited creativity started to feel like unlimited innovation.

- Clients became self-sufficient.

- Agentic simulations were built directly into client systems for real-time updates and preparation.

This was our first AI diamond - a high-impact outcome created by many cycles of experimentation compounding into real value.

It only appeared because we kept the flywheel turning, each cycle increasing the odds that something would break through.

95% adoption in eight weeks.

Then it was time to take the AI diamond global.

BTS is decentralized and highly entrepreneurial. We operate across 24 countries and 38 offices, where local teams have real autonomy.

And historically? That’s meant a low appetite for adopting something built somewhere else and pushed from the center.

So we expected resistance.

Instead, something surprising happened.

In the first eight weeks, we saw 95% adoption across our global footprint.

It felt completely different from our own digital initiatives, ERP implementations, and top-down rollouts of the past.

This moved on its own. Why?

We realized it didn’t start with a framework or a model. It started with a feeling.

The feeling of being at the leading edge of one’s craft and profession.

- Joy

- Excitement

- Pride

As we watched this play out across teams, it stopped feeling like isolated wins.

There was a pattern to it. A repeatable, organic, innovation motion.

And the flywheel didn’t stop with simulations.

It spread across finance, sales enablement, legal, operations, and client delivery. Some cycles led to small improvements, and others revealed new diamonds.

Not because we planned for them, but because we built the conditions for people to find them.

The question I'd ask any CEO right now: Is your flywheel turning, or are you still waiting for the perfect plan?

In Part 2, I’ll share the key success factors behind the breakthrough, and what we’re now seeing across more than 120 global clients.

1. The Conversation Has Changed

Over the past two years, the debate about artificial intelligence has been driven mainly by technology vendors and consulting firms urging companies to accelerate adoption.

Today the conversation is different. Financial markets and analysts are now the ones asking the key question:

Where is the return?

The data shows that markets have barely priced in expectations of AI-driven earnings improvement for most non-technology companies. While a few large technology companies concentrate the expectations, the rest of the market remains under pressure to demonstrate real impact on results.

This is no longer about hype or headlines. It is about creating real, measurable, and sustainable value.

And the diagnosis is clear: the challenge is not the technology but organizational adoption.

That is where the real opportunity lies.

2. Organizations Are Hitting a Wall, and They Know It

After two years of broad AI programs (mass licenses, “AI for everyone” sessions, awareness campaigns), many organizations are asking themselves the same uncomfortable question:

“Now what?”

Initiatives have been launched. Pilots have been run. But the leap to scalable, measurable impact has yet to arrive.

Teams use AI tools to save minutes. Some pilots stay in the testing phase for months, even years, without scaling. And the transition from “AI awareness” to “AI that delivers business results” becomes territory for which few organizations were truly prepared.

The challenge is not getting started. It is scaling.

3. Why Skepticism Exists: The Operational Reality

When we look at what happens in practice, the operational reality helps explain the market’s skepticism. The same patterns repeat across sectors:

- Many AI initiatives get stuck at the pilot stage and never scale.

- A significant percentage fail to generate measurable impact.

- A productivity “J-curve” appears: an initial phase of disruption before the benefits show up.

- “Shadow AI,” employees using personal tools without governance, is becoming the norm, with the associated risks.

The limiting factor is not access to models or tools.

It is organizational capability and adoption: processes, roles, governance, skills, and discipline in value generation.

4. What Organizations That Are Successfully Scaling AI Do Differently

The companies that are managing to scale AI do not necessarily have bigger budgets or more technical talent. What they have is greater organizational discipline.

Three elements make the difference:

- They build capabilities to change real behaviors.

They do not stop at raising awareness. Generic “AI for everyone” webinars are not enough. They build structured, role-based capabilities:

- Executives able to govern AI strategy.

- Managers who know how to redesign processes and ways of working.

- Power users who lead the identification and development of use cases.

- And technical profiles who take those use cases from idea to production.

- They build a data culture, not just infrastructure.

Clean pipelines matter. But so does a shared understanding of data quality, governance, and responsible use of AI.

Without both dimensions, initiatives quickly hit a ceiling: technically viable but organizationally blocked.

- They manage AI as an investment portfolio, not as a list of projects.

Every initiative has a business case.

Use cases are qualified before resources are allocated.

ROI is measured.

They do not chase every trend. They prioritize rigorously, and they stop what is not working.

These patterns are neither theoretical nor aspirational. They are observable. And replicable.

5. The Netmind AI Model: From Adoption to Impact at Scale

At Netmind, we have designed an approach precisely to close this gap between intention and scale.

Our AI model is an integrated framework that helps organizations turn the potential of AI into measurable results, working in a coordinated way across three interdependent dimensions:

Pillar 1 - Business Value: Making Every Initiative Justify Its Investment

AI without a clear business case is just experimentation.

We work with leadership teams to establish a solid discipline of value generation:

- Identifying the highest-impact use cases.

- Building rigorous business cases.

- Defining metrics and measurement frameworks.

- Designing governance structures that distinguish strategic programs from collections of disconnected pilots.

The question is not “What can AI do?” but:

“What should it do for us, and how will we know it is working?”

Pillar 2 - People and Organization: Building Capabilities That Last

The most common reason AI does not scale is not technical. It is human.

Teams do not know how to work differently.

Managers do not know how to lead in hybrid human-AI environments.

Executives lack clear frameworks for deciding where to invest.

Our capability-development architecture covers the entire organization at three levels:

- L100 - AI Fluency: Broad awareness of what AI is, what it can and cannot do, and how it affects each role. This is the foundation. Without it, change does not take hold.

- L200 - AI Application: Practical, role-based training for managers and business owners: identifying use cases, redesigning processes, and leading adoption.

- L300 - AI Specialization: Advanced learning paths for power users, internal champions, and technical profiles who take use cases from concept to production and consolidate long-term capability.

A key principle of our approach:

Self-sufficiency over dependency.

We do not design programs that require permanent external support. We build internal capability so that organizations can operate, adapt, and scale on their own.

Pillar 3 - Technology and Data: The Foundation for Moving Forward with Speed and Safety

Strategy and capabilities need the right infrastructure.

We support organizations in developing:

- Data governance frameworks.

- Quality standards.

- Responsible-AI guardrails.

Together, these allow organizations to move quickly and safely without introducing new risks.

We do not act as technology integrators.

We work from a business and organizational perspective, ensuring that technology investments are backed by the processes and capabilities needed to generate real impact.

6. How We Work: Co-Creating Instead of Delivering

The traditional AI consulting model is still, in many cases, a delivery model: something is built, handed over, and the project is considered closed.

What usually happens next is well known: the handover fails, the internal team cannot sustain the solution, and the pilot does not scale.

At Netmind, we do not build for organizations. We build with them. And we develop their capabilities so they can keep building without us.

Every project is designed around co-creation. Our experts work alongside internal teams. Methodology, tools, and governance frameworks are transferred in real time.

That is what makes the results sustainable.

It is also what turns the investment in capability into a strategic asset rather than a recurring cost.

The Bottom Line

Today, markets doubt that most organizations will manage to capture real value from AI.

We believe they are wrong. That prediction will only come true for those who approach AI as just another tool or a simple training program, and not as a real transformation of how work gets done, how decisions are made, and how value is created.

The organizations that will make the difference will be those that develop organizational capability in AI, not just technology deployment.

AI is not just a tool: it is a new organizational capability.

The real challenge is no longer getting started, but scaling with purpose and strategy.
